Watch first: a current series-grade AI production benchmark

Before the trend breakdown, watch the House of David final trailer on Prime Video to calibrate against current audience expectations for cinematic AI filmmaking quality.

1) AI ad creation stacks are becoming multi-model by default

What changed this week: Adobe's guidance on partner model access in Firefly was refreshed on March 19, 2026, reinforcing that creators can generate with non-Adobe partner models without leaving the same workflow.

Why it matters commercially: AI advertising agency teams can pick the best model for each production task (style frames, motion tests, polish passes) instead of forcing one model across every campaign stage.

Apply now: Split your next brief into three model lanes: concept stills, motion prototypes, and final render candidates. Score each lane independently before full generative video production.
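A minimal sketch of the lane split above, assuming each candidate model gets a 0-10 score from an internal review in each lane. The lane keys, model labels, and scores are hypothetical placeholders, not benchmarks of real products; the point is only that each lane picks its winner independently.

```python
from dataclasses import dataclass

# The three lanes from the brief split above (hypothetical key names).
LANES = ("concept_stills", "motion_prototypes", "final_render_candidates")


@dataclass
class LaneScore:
    lane: str
    model: str    # hypothetical model label, not a real product name
    score: float  # assumed 0-10 internal review score


def pick_model_per_lane(scores: list[LaneScore]) -> dict[str, str]:
    """Return the highest-scoring model for each lane, judged independently."""
    best: dict[str, LaneScore] = {}
    for s in scores:
        if s.lane not in LANES:
            raise ValueError(f"unknown lane: {s.lane}")
        current = best.get(s.lane)
        if current is None or s.score > current.score:
            best[s.lane] = s
    # Every lane needs at least one scored candidate before production.
    missing = [lane for lane in LANES if lane not in best]
    if missing:
        raise ValueError(f"no scores for lanes: {missing}")
    return {lane: best[lane].model for lane in LANES}


if __name__ == "__main__":
    reviews = [
        LaneScore("concept_stills", "model_a", 8.5),
        LaneScore("concept_stills", "model_b", 7.0),
        LaneScore("motion_prototypes", "model_a", 6.0),
        LaneScore("motion_prototypes", "model_c", 8.0),
        LaneScore("final_render_candidates", "model_b", 9.0),
    ]
    print(pick_model_per_lane(reviews))
```

Here a different model wins each lane, which is exactly the outcome a single-model workflow would hide.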

Source: Adobe partner models guidance (updated Mar 19, 2026)

2) AI agents for marketing are now localization engines, not just assistants

What changed this week: OpenAI's Descript customer story (published March 6, 2026) has become a reference point for creator teams because it documents one workflow that localizes creator videos into many languages at scale.

Why it matters commercially: For AI video commercials, localization latency is becoming a growth bottleneck. Teams that translate and adapt quickly can test more regions before media spend scales.

Apply now: Add an "adaptation sprint" to your post-production pipeline that covers language dubbing, subtitle QA, and market-specific CTA swaps before distribution.
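One way to make the adaptation sprint enforceable is a per-market gate: a market ships only when all three steps are done. This is a minimal sketch; the step keys and the `MarketAdaptation` class are hypothetical, and the locale codes are illustrative.

```python
from dataclasses import dataclass, field

# The three adaptation steps named above (hypothetical key names).
SPRINT_STEPS = ("language_dubbing", "subtitle_qa", "cta_swap")


@dataclass
class MarketAdaptation:
    market: str  # illustrative locale code, e.g. "de-DE"
    done: set[str] = field(default_factory=set)

    def complete(self, step: str) -> None:
        if step not in SPRINT_STEPS:
            raise ValueError(f"unknown step: {step}")
        self.done.add(step)

    def ready_for_distribution(self) -> bool:
        # A market is cleared only when every sprint step is complete.
        return all(step in self.done for step in SPRINT_STEPS)


def ready_markets(markets: list[MarketAdaptation]) -> list[str]:
    """List the markets whose adaptation sprint is finished."""
    return [m.market for m in markets if m.ready_for_distribution()]


if __name__ == "__main__":
    de = MarketAdaptation("de-DE")
    for step in SPRINT_STEPS:
        de.complete(step)
    jp = MarketAdaptation("ja-JP")
    jp.complete("language_dubbing")
    print(ready_markets([de, jp]))  # ['de-DE']
```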

Source: OpenAI x Descript customer story

3) Creator-native aesthetics are winning over "perfect" renders

What changed this week: Higgsfield's creator adoption metrics are now being widely cited in campaign planning: over 10 billion social views tied to its camera motion and style-control workflows.

Why it matters commercially: AI commercial production that feels "too clean" often underperforms in short-form feeds. Tasteful imperfection, tighter motion language, and format-native cuts are outperforming generic polish.

Apply now: Test a three-cut stack: a high-finish brand cut, a creator-native cut, and a hybrid cut. Put 20% of the paid budget behind each cut before committing the full budget.
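Read literally, 20% behind each of three cuts commits 60% of paid spend to the test and holds 40% back for the winner. A minimal arithmetic sketch, assuming the 20% is a share of the total paid budget per cut (the text does not rule out a separate test pool). Amounts are whole currency units so integer math stays exact; the cut keys are hypothetical.

```python
# Hypothetical keys for the three cuts in the test stack.
CUTS = ("brand_cut", "creator_native_cut", "hybrid_cut")


def three_cut_test_split(total_paid_budget: int, share_pct_per_cut: int = 20) -> dict[str, int]:
    """Split a paid budget across three test cuts plus a held-back reserve.

    Assumption: share_pct_per_cut is a percentage of the *total* paid budget.
    """
    if not 0 < share_pct_per_cut * len(CUTS) <= 100:
        raise ValueError("per-cut shares must be positive and fit within the budget")
    split = {cut: total_paid_budget * share_pct_per_cut // 100 for cut in CUTS}
    # Whatever the test does not spend stays in reserve for the winning cut.
    split["held_back"] = total_paid_budget - sum(split.values())
    return split


if __name__ == "__main__":
    print(three_cut_test_split(10_000))
    # {'brand_cut': 2000, 'creator_native_cut': 2000, 'hybrid_cut': 2000, 'held_back': 4000}
```

If your team reads "20%" as the whole test pool instead, pass a smaller per-cut share (for example 7) and the reserve grows accordingly.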

Source: OpenAI x Higgsfield customer story

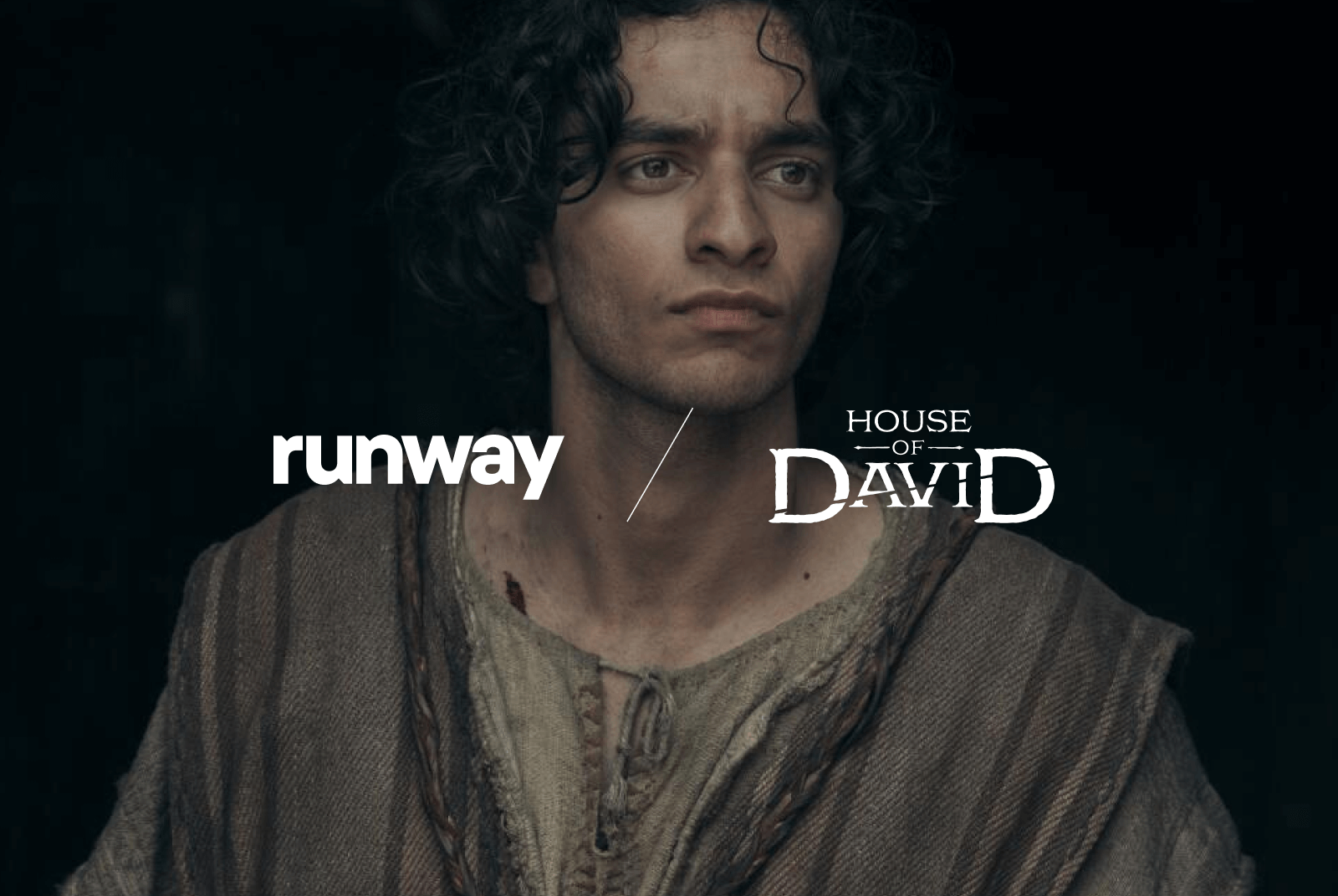

4) Generative video production is moving from ad tests to long-form pipeline support

What changed this week: Runway's market signal is no longer only "viral clips". Its public case material highlights support for narrative workflows in series production, including previs, shot ideation, and visual iteration.

Why it matters commercially: Brands commissioning episodic storytelling or campaign universes can now run AI support upstream, not only at the social cutdown stage.

Apply now: Run a dual-track pre-production sprint: traditional storyboards plus an AI shot lab. Approve only concepts that survive both narrative and execution constraints.
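The approval rule is an AND gate across the two tracks: a concept that reads well on boards but fails in the shot lab (or the reverse) is dropped. A minimal sketch, assuming each track produces a simple pass/fail verdict; the `Concept` fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Concept:
    name: str
    passes_narrative_review: bool  # verdict from the traditional storyboard track
    passes_execution_test: bool    # verdict from the AI shot lab track


def approve(concepts: list[Concept]) -> list[str]:
    """Keep only concepts that survive both tracks."""
    return [
        c.name
        for c in concepts
        if c.passes_narrative_review and c.passes_execution_test
    ]


if __name__ == "__main__":
    slate = [
        Concept("desert_chase", True, True),
        Concept("crowd_battle", True, False),  # strong story, fails execution
        Concept("abstract_loop", False, True),  # executes well, weak story
    ]
    print(approve(slate))  # ['desert_chase']
```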

Source: Runway customer story (House of David)

5) Distribution is shifting toward high-attention inventory for AI video commercials

What changed this week: Google's update on non-skippable placements for Video Reach Campaigns marks a practical shift in where AI ad creation outputs can run at scale, especially on connected TV.

Why it matters commercially: AI-generated assets are now expected to perform across both feed-native placements and premium lean-back formats. Creative strategy has to account for both contexts from day one.

Apply now: Produce every hero concept in two versions: a short feed hook (6-15s) and a narrative hold version for non-skippable inventory (15-30s).
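A pre-delivery check can confirm that each hero concept has both versions and that each sits inside its window. This sketch uses only the duration ranges stated above and assumes inclusive bounds; it does not encode Google's own placement specs, which you should confirm separately. The version keys are hypothetical.

```python
# Duration windows from the text, in seconds (inclusive bounds assumed).
VERSION_WINDOWS = {
    "feed_hook": (6, 15),
    "narrative_hold": (15, 30),
}


def check_hero_versions(durations: dict[str, float]) -> list[str]:
    """Return a list of problems; an empty list means both versions fit."""
    problems = []
    for version, (lo, hi) in VERSION_WINDOWS.items():
        if version not in durations:
            problems.append(f"missing {version} version")
        elif not lo <= durations[version] <= hi:
            problems.append(f"{version} is {durations[version]}s, expected {lo}-{hi}s")
    return problems


if __name__ == "__main__":
    print(check_hero_versions({"feed_hook": 10, "narrative_hold": 30}))  # []
    print(check_hero_versions({"feed_hook": 20}))
```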

Source: Google Ads update on VRC non-skippable inventory

We build trend-to-output workflows for AI filmmaking and AI video commercials, including concept tests, model routing, and distribution planning.

Sources

- Adobe: Non-Adobe models in Adobe products (updated Mar 19, 2026)

- OpenAI customer story: Descript (Mar 6, 2026)

- OpenAI customer story: Higgsfield (Jan 21, 2026)

- Runway customer story: House of David

- Runway announcement: Introducing Runway Labs (Mar 11, 2026)

- Google Ads: VRC non-skippable inventory update

- Prime Video: House of David final trailer